Table of Contents

1

Introduction

2

Object Detection

3

Regression-Based Object Detection (One-stage)

4

Classification-Based Object Detection (Two-stage)

5

Conclusion

6

Contributors

Introduction

Introduction

The ever-increasing advancement of computer vision applications in object detection for industrial applications has supported the growth in imaging data and the need to build an autonomous intelligent management system for this data. Understanding a complete image requires the precise detection of the object location in the image/ video, which has become an area of study for major companies. For example, the scope of computer vision has extensively researched in the area of detection for Automation, Consumer Packaged Goods (CPG), Medical Imaging, Military, and Surveillance. The market for computer vision applications is estimated to grow by more than $19.47 billion in 2020.

In object classification, the evolution of the convolutional neural network (CNN) has eliminated the manual features extraction, which is helpful in image classification. An unlimited data can be feed to CNN to produce valuable output due to its millions of learnable constants to estimate, which is more computationally expensive and results in the need for graphical processing units (GPUs) for model training. CNN extract features directly from images. These extracted features are not pre-trained, but they can learn while the network trains itself on collected images.

Deep learning models in computer vision are becoming highly accurate due to automatic feature extraction. The architecture of deep CNN comprises complex models, as these models need large, labeled image datasets to perform related tasks such as object classification, detection, object tracking, and recognition to achieve high accuracy.

With the progression in technology and the availability of powerful graphics processing units (GPUs), deep learning has been employed on large datasets, providing breakthrough outcomes to the research community in the field of object classification, detection, and recognition. Deep learning uses powerful computational resources to execute both the training and testing of large datasets. In computer vision, image classification is the most actively studied area and has accomplished astonishing results during the application of deep learning techniques in worldwide competitions through PASCAL, ILSVRC, VOC, and MS-COCO.

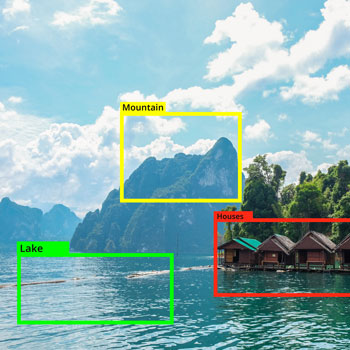

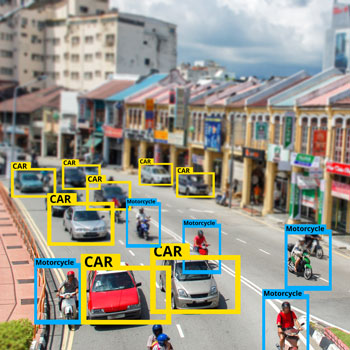

In object detection, the main aim is to determine any specified instance of objects from the target varieties such as crack, damage on the vehicles, or any other target to detect in an image using the trained model. Moreover, if present, then returns the spatial location and extent of a single object (by bounding box) with a specified accuracy on the detected object. Object detection techniques can collaborate with multiple technologies such as the internet of things (IoT), edge computing, and artificial intelligence in terms of applications. The above technology integration can develop intelligent retail store surveillance systems, intelligent manufacturing processes, autonomous cars, robot vision, human-computer interaction (HCI), consumer electronics, etc.

Image segmentation is an important area in the field of image processing and computer vision. The image segmentation involves partitioning images/ video frames into multiple segments/ objects, which can apply in various areas such as medical image analysis, real-time surveillance, scene understanding, intelligent robotic perception systems, and many more. Image segmentation can formulate as a classification problem of pixels with semantic labels (semantic segmentation) or partitioning of individual objects (instance segmentation). Semantic segmentation performs pixel-level labelling with a set of object categories (e.g., human, car, tree, sky) for all image pixels. Thus, it is generally considered as a hard undertaking than image classification, which predicts a single label for the entire image. Instance segmentation extends the semantic segmentation scope further by detecting and delineating each object of interest in the image (e.g., partitioning of individual persons). With the expansion of deep learning techniques in a wide range of computer vision applications, there is a lot of development in the image segmentation area. In the area of medical/ biomedical image segmentation, U-Net and V-Net are the two widely-used image segmentation models. The scope of the image segmentation study is limited in this whitepaper.

Deep learning is one of the most promising techniques in this decade and has resulted in significant developments in the object detection field. Real-time detection is one of the most challenging and studied areas in the field of object detection and tracking, as it can monitor, for example, products on a conveyor belt to detect damaged products. The diagram below represents the development of the various deep learning object detection algorithms in the last several years:

To attain high accuracy and high efficiency are the two important aspects of object detection needed by the detection algorithm to accomplish:

- The challenge to achieve accuracy is:

- A large range of intra-class variations includes factors such as color, texture, material, shape, size, etc.

- Image under unconstrained environments includes factors such as object appearance, such as lighting (dawn, day, dusk, indoors), physical location, weather conditions, cameras, backgrounds, illuminations, occlusion, and viewing distances, etc.

- Distortions: includes factors such as digitization artifacts, noise corruption, poor resolution, filtering distortions, etc.

- Unrecognized data includes the list of object categories on which the benchmark dataset is small compared to humans can recognize.

- The challenge to achieve efficiency is:

- The limitation of computational capabilities and storage space of the mobile/wearable device make efficient object detection critical.

- Need to localize and recognize thousands of images.

- Able to detect previously unseen objects, unknown situations, and high data rates.

- Annotation of images manually is becoming a challenge as the number of categories grows, resulting in weakly supervised strategies.

Object Detection

Object Detection

The main objective of object detection is to identify the undiscovered categories of the objects and utilize those in real-world applications such as farming, animal husbandry, self-driving, facial recognition, medical imaging, and many more in the images or real-time. The below diagram represents the overview of object detection and various methodology in detection.

The basic architecture of the convolutional neural networks (CNNs) shown in the below figure containing several layers with various functions. The convolutional layers are the key building blocks of a CNN. At each convolutional layer, the input data combines with a set of L learnable kernels, weights W = {W1, W2, WL} added by bias b = {b1,b2,…bL}. These kernels are also called receptive fields. Then a new features map XL is generated by inputting the convolutional results into an element-wise nonlinear function σ (.). The output vector for the first layer with the Lth feature map is calculated by XL = σ(WL*X +bL).

The function σ(.) is known as the activation function, and there are many activations functions available such as rectified linear function (ReLU), hyperbolic tangent function, or sigmoid functions. The pooling layer follows the convolutional layers to performs the nonlinear down-sampling. Many nonlinear functions can be utilized, but the commonly used is max-pooling. The max-pooling layer can partition the features map into a set of non-overlapping rectangles and for each such subregions and provide the maximum values in the output. In addition to max pooling, the pooling layers can also perform other nonlinear operations such as average pooling or the L2-norm pooling and a form of translation invariance. The pooling layer helps to reduce the spatial size of intermediate representations, the number of parameters, and the amount of computation in CNN architecture to control overfitting.

The high-level reasoning in CNN is implemented with fully connected (FC) layers after multiple convolutional and pooling layers. The units in the FC layers are fully connected to all activations in the previous layer. Hence, the computed activations consist of a matrix multiplication followed by a bias offset and output the classes or the probabilities. By calculating the loss between the predicted and true data, the CNN can be trained by an end-to-end method as the number of weights is reduced by convolutional and pooling layers. As per the application, there are different types of loss functions. Some of the loss functions heavily are sigmoid cross-entropy loss, Softmax loss, and Euclidean loss.

The paper has covered some commonly used deep-learning-techniques overviews, segregated further into one-stage detection (Regression-Based Object Detectors). Detection happens in one step approach and two-stage detection (Classification-Based Object Detectors), in which detection happens in a two-step approach. The computation speed is one of the main factors between the two methods.

Regression-Based Object Detection (One-stage)

Regression-Based Object Detection (One-stage)

- You Only Look Once (YOLO)

Redmon et al. from the University of Washington (go DAWGS!) have proposed the first outline of this fast real-time multi-object detection algorithm in 2015. YOLO is the strongest, fastest, and simplest object detection algorithm used in real-time object detection. YOLOv5 is the latest version launched with 140 frames per second (FPS) in a batch has achieved by running at Tesla P100. While YOLOv4 can achieve 30 FPS and YOLOv5 can achieve 10 FPS if the batch size is 1.The other object detection algorithms use regions to localize objects within the image, but the YOLO approach is entirely different. In Yolo, an entire image passes through a single CNN, where an image is divided into multiple regions, and for each region, it predicts bounding boxes and class probabilities.

- Single Shot MultiBox Detector (SSD)

The Single Shot MultiBox Detector method for detecting objects in images using a single deep neural network has proposed by Wei Liu. SSD is designed purely for real-time object detection in a deep learning era. It discretizes the output space of bounding boxes into a set of default boxes over different aspect ratios and scales per feature map location. During prediction time, the network generates scores for the presence of each object category in each default box and produces adjustments to all the boxes to better match the object shape. SSD eliminates proposal generation and subsequent pixel or feature resampling stage and encapsulates all computation in a single network.To improvise Fast R-CNN’s real-time speed detection accuracy, SSD eliminates the region proposal network (RPN). With a batch size of 1, the SSD300 method can achieve 74.3 mAP with a 46 FPS, and the SSD512 method can achieve 76.8 mAP with a 19 FPS. While a batch size of 8, the SSD300 method can achieve 74.3 mAP with a 59 FPS, and the SSD512 method can achieve 76.8 mAP with a 22 FPS. The drawbacks of SSD: at the cost of speed, accuracy increases with the number of default boundary boxes. SSD detector has more classification errors when compared to R-CNN but low localization error while dealing with similar categories.

- RetinaNet

Single Shot MultiBox Detector (SSD) achieves better accuracy when applied over a dense sampling of object locations, aspect ratios, and scales. One of the challenges with the SSD application is that it achieves a 10-20% lower AP, whereas YOLO focuses on an even more extreme speed/accuracy trade-off. Tsung-Yi Lin has implemented RetinaNet to improvise on the SSD and YOLO drawbacks. It can match the speed of previous one-stage detectors while surpassing the accuracy of all existing state-of-the-art two-stage detectors. In RetinaNet, the proposed focal loss allows the training of highly accurate dense object detectors in the presence of vast numbers of misclassified examples. On MS-COCO 2017 following observations have been found:- ResNet-101-FPN can generate AP of 39.1 when a threshold is not fixed, AP of 59.1 at IoU threshold fixed at 50%, and AP of 42.3 at IoU threshold fixed at 70%.

- ResNeXt-101-FPN can generate AP of 40.8 when s threshold is not fixed, AP of 61.1at IoU threshold fixed at 50%, and AP of 44.1at IoU threshold fixed at 70%.

- SqueezeDet

Bichen Wu has proposed SqueezeDet, a small model size, and a better energy-efficient model. The main motive behind the development of this model was high accuracy, real-time inference speed, small model size, and energy efficiency for autonomous driving. In the first stage, the detector uses stacked convolution filters to gain a high-dimensional, low-resolution feature map for the input image. The second stage utilizes ConvDet, a convolutional layer, to accept the feature map as input, compute a large amount of object bounding boxes, predict their categories, and filter these bounding boxes to obtain final detections. SqueezeNet is the backbone architecture to build SqueezeDet, which attains the accuracy of the AlexNet level with a model size of <8 MB. It consists of approximately 2 million trainable parameters and attains a higher accuracy level than VGG19 and ResNet-50, with 143 million and 25 million parameters. On NVIDIA TITAN X GPUs, on the model are the following observations:

SqueezeDet – 76.7 mAP with 57.2 FPS

SqueezeDet+ – 80.4 mAP with 32.1 FPS - CornerNet

Hei Law has proposed the CornerNet for object detection, wherein the object is detected by a pair of unique points using a CNN instead of drawing an anchor box around the detected object. The need for designing anchor boxes is eliminated, which is usually used in one-stage detectors to predict the objects as paired key points, i.e., top-left and top-right corners. It has introduced corner pooling, a new version of the pooling layer that helps the network better localize corners. It uses a single convolutional network to forecast a heatmap for the top-left corners of all instances of the same object category, a heatmap for all bottom-right corners, and an embedding vector for each detected corner. On the MS-COCO dataset, CornerNet has achieved 42.2% AP which outperforms the existing one-stage detectors.

Classification-Based Object Detection (Two-stage)

Classification-Based Object Detection (Two-stage)

- Region-Based Convolutional Neural Network (R-CNN)

Ross Girshick has proposed the region-based convolutional neural network, with the main focus on a simple and scalable detection algorithm that improves mean average precision (mAP) by more than 30% relative to the previous best result on VOC 2012 – achieving an mAP of 53.3%. The method uses two main points: first, the application of high-capacity convolutional neural networks (CNNs) to bottom-up region proposals to localize and segment objects and second, when labeled training data is scarce, supervised pre-training for an auxiliary task, followed by domain-specific fine-tuning, yields a significant performance boost.Each object region proposal goes through rescaling to transform into a fixed image size and then applied to the CNN model on a pre-trained ImageNet, i.e., AlexNet for feature extraction. A support vector machine (SVM) algorithm detects the object within each region proposal and helps in identifying object detected classes. One of the main drawbacks is that it consumes more time to train the network, as we need to classify 2000 object region proposals per image.

- Spatial Pyramid Pooling Network (SPP-net)

Kaiming He has proposed SPPNet, to generate a fixed-length representation regardless of image size/scale as pyramid pooling can strongly support object deformations to improve all CNN-based image classification. SPP-net can compute the feature maps only once from an entire image, and it pools features in arbitrary regions (also called sub-images) produces fixed-length representations for training. The detectors can help to avoid frequent computation of the convolutional features. SPP-net has achieved detection accuracy of 59.2% mAP on the Pascal VOC 2007. Some of the drawbacks of SPP-net are multi-stage training, fully-convolutional (FC) layers fine-tuning, and it ignores earlier layers. The above challenge can overcome by the Fast R-CNN. - Fast Region Convolutional Neural Network (Fast R-CNN)

R. Girshick has proposed a Fast R-CNN detector and is an improvement of SPPNet and R-CNN. It is fast to train and test and has higher detection quality (mAP) than R-CNN, SPPnet training can update all network layers, and no disk storage is required for feature caching. Fast R-CNN architecture. An input image and multiple regions of interest (ROIs) areinput into a fully convolutional network. Each RoI is pooled into a fixed-size feature map and then mapped to a feature vector by fully connected layers (FCs). The network has two output vectors per region of interest: softmax probabilities and per-class bounding-box regression offsets. The architecture is trained end-to-end with a multi-task loss. The method has achieved 66% mAP detection accuracy on PASCAL VOC 2012 compared to the 62% mAP for R-CNN. - Faster Region Convolutional Neural Network (Faster R-CNN)

Shaoqing Ren has proposed Faster R-CNN to overcome the drawbacks of Fast R-CNN and SPP-net. It has reduced the running time of these detection networks, exposing region proposal computation as a bottleneck. The method has introduced Region Proposal Network (RPN) that shares full-image convolutional features with the detection network, thus allowing nearly cost-free region proposals. At the initial level, first, the region of interest is performed, and then, the pooled area is transferred to the CNN and two FC layers for softmax classification and the bounding box regressor. On the MS-COCO dataset, the method has achieved mAP = 42.7%, VOC-2012, mAP = 70.4%; 5 FPS with the deep VGG-16 model and 17 FPS with the ZF net. - Feature Pyramid Networks (FPN)

Tsung-Yi Lin has proposed FPN method is built on a multi-scale, pyramidal hierarchy of deep convolutional networks to construct feature pyramids with marginal extra cost. The features in CNN deep layers are helpful but are not conducive for object localization in category recognition. For object detection, either Fast R-CNN, Faster R-CNN, and single-shot multi-box detector (SSD) to be implemented to support FPN. FPN follows top-down pathway architecture and lateral connections while constructing high-level semantics at all scales. The method has improved small object detection performance to object scale variation. The implementation of Faster R-CNN on FPN with the backbone of ResNet-101 has achieved detection accuracy of 59.1 mAP on the MS-COCO dataset. - Mask R-CNN

Kaiming He has proposed the Mask R-CNN method. It is an extension of Faster R-CNN, with the ability to run at 5 FPS. For generalizing other tasks apply the Mask R-CNN method, e.g., to estimate human poses in the same framework. It utilizes a two-stage procedure where the first stage is similar to RPN and the second stage runs parallelly to predicting the class and box offset. The Mask R-CNN also outputs a binary mask for each region of interest. It can solve computer vision instance problems such as distinct objects in an image or a video. It also extends Faster R-CNN by including a branch for predicting segmentation masks on each Region of Interest (RoI), in parallel with the existing branch for classification and bounding box regression. Mask R-CNN include Faster R-CNN two output for each detected object, a class label, and a bounding-box offset; and the third branch is added to that output the object mask. It is conceptually easy to train and flexible, and it is a general framework to build instance segmentation of objects. The method uses ResNet-FPN as its backbone model for extracting features. - U-Net

Olaf Ronneberger has proposed the U-Net architecture. The U-Net architecture is developed primarily on image segmentation, and it utilizes a fully convolutional network (FCN) and encoder-decoder architecture. The architecture consists of two stages. The first stage is the contracting path, also known as the encoder/ the analysis path, and it is similar to a regular convolution network and provides classification information. The second stage is an expansion path, also known as the decoder/ the synthesis path, consisting of up-convolutions and concatenations with features from the contracting path. This expansion allows the network to learn localized classification information. Additionally, the expansion path can also increase the resolution of the output, which can be passed onto a final convolutional layer to create a fully segmented image. The resulting network is almost symmetrical, giving it a u-like shape. There are various extension models developed for different types of images, for example, U-Net architecture for 3D images, a nested U-Net architecture and many more. The U-Net architecture requires very few annotated images to develop the model, but it lags from Mask R-CNN on precision.

Various Object Detection Method Performance on Pascal Titan X GPU for MS-COCO and Pascal-VOC 2007

| Sr. No. | Architecture | mAP (MS-COCO) |

mAP (Pascal-VOC 2007) |

FPS |

|---|---|---|---|---|

| 1 | YOLOv3 | 33.00% | – | 35 |

| 2 | YOLOv4 | 43.5% | 33 | |

| 3 | SSD | 31.20% | 78.80% | 8 |

| 4 | SqueezeDet | – | – | 57.2 |

| 5 | SqueezeDet+ | – | – | 32.1 |

| 6 | CornerNet | 69.2% | – | 4 |

| 7 | RCNN | – | 66% | 0.1 |

| 8 | SPPNet | – | 63.10% | 1 |

| 9 | Fast RCNN | 35.90% | 70.00% | 0.5 |

| 10 | Faster RCNN | 36.20% | 73.20% | 6 |

| 11 | Mask RCNN | – | 78.20% | 5 |

The CNN-based detectors have achieved better results in object detection. However, the above algorithms suffer several limitations. For example, the two-stage algorithms require more computational power, and hence the cost is associated with it. It also yields bounding boxes that are not precise and may contain more than one object. Usually, these challenges arise in images with overlapping and small objects. From the above study, use cases can influence the selection of algorithm in case of a relatively small dataset and does not require real-time results as it is good to utilize anyone of the second-stage detection algorithms, whereas it is good to work with single-stage detection algorithms for the live video feed.

Conclusion

Conclusion

Despite progress in the object detection field, some existing techniques are now part of many industrial applications (robotic vision, autonomous driving, industrial automation, human-computer interaction (HCI), package inspection, damage detection, and product and components Assembly). Still, the technology remains significantly far away from human vision while addressing real-world challenges.

A few of the object detection applications include: detecting damaged product/package, keeping factory worker safe, adhering strictly to the standards help to improve the productivity of the assembly line, sense its environment, and move safely with little or no human input. In healthcare, the rapid development of new and upgraded versions of the current architecture has led to new heights in the image segmentation area. The usage areas on medical images are MRI, CT scan, retinal fundus, microscopy and ultrasound and many more. The various usage in medical applications is the brain, pathology, cardiovascular, eye, liver, skin lesions and many more. The application of U-Net architecture is more in the healthcare field, but the Mask R-CNN is gaining ground.

Contributors

Arvind Ramachandra

Vice President of Technology & Cloud Services

Munish Singh

Lead, Research & Advisory AI/ML

About Innova Solutions

Innova Solutions is a leading global information technology services and consulting organization with 30,000+ employees and has been serving businesses across industries since 1998. A trusted partner to both mid-market and Fortune 500 clients globally, Innova Solutions has been instrumental in each of their unique digital transformation journeys. Our extensive industry-specific expertise and passion for innovation have helped clients envision, build, scale, and run their businesses more efficiently.

We have a proven track record of developing large and complex software and technology solutions for Fortune 500 clients across industries such as Retail, Healthcare & Lifesciences, Manufacturing, Financial Services, Telecom and more. We enable our customers to achieve a digital competitive advantage through flexible and global delivery models, agile methodologies, and battle-proven frameworks. Headquartered in Duluth, GA, and with several locations across North and South America, Europe and the Asia-Pacific regions, Innova Solutions specializes in 360-degree digital transformation and IT consulting services.

For more information, please reach us at – [email protected]

Your content goes here. Edit or remove this text inline or in the module Content settings. You can also style every aspect of this content in the module Design settings and even apply custom CSS to this text in the module Advanced settings.